As of version 0.3.0, deployctl is no longer a gitlab-runner helper but a dedicated runner for static deployments.

Read on for the reasons behind this change.

Motivation:

While developing deployctl, we used it heavily to automatically deploy releases and branches for testing and production, and it became apparent that we needed a dedicated runner, for the following reasons.

Security:

Since deployctl strives for key-, password- and token-less deployment, the runner is located on the web server itself, where deployctl configures the web server and puts the content in the right place.

This called for a gitlab-ci-multi-runner with a shell executor, which raised real security concerns: abuse or misuse of the gitlab-ci-token, and unauthorized access to project content, either through a stolen token or by reading data outside the project-dir.

deployctl runner does not run user scripts in bash. Only deployctl commands are passed to the deployctl command processor, and any non-deployctl commands are simply ignored. This denies the script access to bash, and thus to the system, while still allowing custom content locations through special variables:

- DEPLOY_PUBLISH_PATH: path(s) to the content for static deployment
- DEPLOY_REPO_PATH: path to the location of the rpm and deb directories
- DEPLOY_RELEASE_PATH: path(s) to the release content

These variables can contain bash substitutions and deployctl's own variables.
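A minimal sketch of the filtering idea, written in bash for illustration only (deployctl's own command processor is not shown here, and `filter_script` is a hypothetical name): job script lines arrive on stdin, lines starting with deployctl are kept, and everything else is dropped with a note on stderr. The filter by itself is not a security boundary; the point is that accepted lines go to deployctl's parser, never to bash, so a line like `deployctl x; rm -rf /` is never interpreted as shell.

```shell
# filter_script (hypothetical): keep only deployctl commands from a job script.
# Reads script lines on stdin, prints accepted lines on stdout and reports
# ignored lines on stderr. Accepted lines are meant for a command parser,
# not for `bash -c`.
filter_script() {
  local line
  # `|| [ -n "$line" ]` also handles a last line without a trailing newline
  while IFS= read -r line || [ -n "$line" ]; do
    case "$line" in
      deployctl|"deployctl "*) printf '%s\n' "$line" ;;
      *) printf 'ignored: %s\n' "$line" >&2 ;;
    esac
  done
}
```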

Better access-control protection for deployctl:

Access control for deployments is based on the repository name. With gitlab-runner, all variables are simply exported into a bash shell, making it impossible to distinguish a genuine namespace from a forged one.

With the deployctl runner, we have access to the full job, which provides information beyond the CI variables, making it a foolproof solution.
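To illustrate why the source of the repository name matters, here is a minimal sketch of a namespace allow-list check in bash. The function name, the allow-list and the assumption that the project path is taken from the job data the runner receives from GitLab (rather than from an environment variable a job script could set) are all illustrative; deployctl's actual access rules are not described here.

```shell
# is_deploy_allowed PROJECT_PATH NAMESPACE... (hypothetical)
# Succeeds if PROJECT_PATH lies inside one of the allowed namespaces.
# PROJECT_PATH is assumed to come from trusted job data, not from the
# job's own environment, where a script could forge it.
is_deploy_allowed() {
  local project_path=$1; shift
  local ns
  for ns in "$@"; do
    case "$project_path" in
      "$ns"/*) return 0 ;;   # quoted "$ns" is matched literally, no globbing
    esac
  done
  return 1
}
```

For example, `is_deploy_allowed mygroup/site mygroup` succeeds, while `mygroupx/site` is rejected for the same allow-list because only the exact namespace followed by a slash matches.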

gitlab-runner needs to run as a privileged user

gitlab-runner is started as a service that needs root privileges, in order to protect the runner configuration from the shell executor. This also means that we cannot run gitlab-runner on a container platform (OpenShift/Kubernetes) that does not allow privileged containers.

deployctl runner runs as a non-privileged user, with a passwordless sudo exception only for testing the nginx configuration and reloading nginx, allowing it to run in an unprivileged container.
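A sketch of how an unprivileged runner might apply a new nginx configuration, assuming a sudoers NOPASSWD rule that covers only `nginx -t` and `systemctl reload nginx` (the exact rule and the systemd unit name are assumptions). `sudo -n` fails instead of prompting if the rule is missing, and the reload only happens after the config test passes; the helper names are hypothetical.

```shell
# Assumed to be permitted by a NOPASSWD sudoers rule; -n never prompts.
nginx_test()   { sudo -n nginx -t; }
nginx_reload() { sudo -n systemctl reload nginx; }

# apply_web_config (hypothetical): reload nginx only if the config test
# passes, so a broken config never takes the running server down.
apply_web_config() {
  if ! nginx_test; then
    echo "nginx config test failed; keeping the running config" >&2
    return 1
  fi
  nginx_reload
}
```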

For the same reason, deployctl switched from certbot to the acme.sh client for automatic HTTPS configuration with Let's Encrypt, at least until we have a C library to handle the ACME requests, as the downside of acme.sh is its rather verbose output.

Clean project-dir at the start of a deployment.

When setting GIT_STRATEGY: none in .gitlab-ci.yml and using only job artifacts as the deployment source, the project-dir is not cleaned, so files that were deleted are still present in the new deployment.

Furthermore, on a shell runner there is no workaround to get it cleaned before the artifacts are downloaded, so some extra, annoying scripting is necessary:

For example, when the artifacts provide the content in output/, we need to run:

rm -rf newoutput_dir

mkdir newoutput_dir

cp -r output/ newoutput_dir/

and this for every deployment, to ensure that no parts of the previous release remain in the new one. See GitLab issues #25275 and #27587.

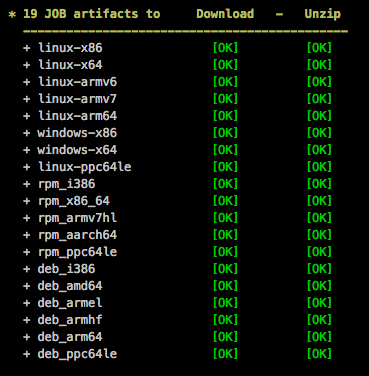

deployctl runner always starts with a fresh, clean project-dir. This is a deliberate choice: deployctl runner only deploys build output, so the deployment source is mostly provided by artifacts and no lengthy git clone of a large repository is needed.
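Instead of the rm/mkdir/cp sequence above, each job can simply get a directory that has never been used before. A minimal sketch, with `fresh_project_dir` and the base path as hypothetical names (how deployctl lays out its project-dirs is not described here):

```shell
# fresh_project_dir BASE (hypothetical): create and print a new, empty,
# uniquely named project-dir under BASE. The job's artifacts are then
# extracted into it, so files deleted from a release cannot linger.
fresh_project_dir() {
  local base=$1
  mkdir -p -- "$base" || return 1
  mktemp -d "$base/job.XXXXXX"
}
```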

Clean project-dir at the end of a deployment.

As with the previous point, gitlab-runner leaves data behind, and there is no way to delete it if the job needs to produce artifacts.

deployctl runner always cleans the project-dir, leaving no data behind other than the intended release in the deployment locations.
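Cleaning up on finish can be sketched with an EXIT trap in a subshell, which fires whether the job command succeeds or fails. `run_job_in_dir` is a hypothetical helper, not deployctl's code; a real runner would need to upload any artifacts before the directory is removed.

```shell
# run_job_in_dir DIR CMD... (hypothetical): run CMD inside DIR, then remove
# DIR regardless of CMD's outcome. CMD's exit status is passed through.
run_job_in_dir() {
  local dir
  dir=$(cd -- "$1" && pwd) || return 1   # absolute path, safe after cd
  shift
  (
    trap 'rm -rf -- "$dir"' EXIT   # runs when the subshell exits
    cd -- "$dir" || exit 1
    "$@"
  )
}
```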

Build status: Pending

When a server has more than one runner registered, the runners check for new jobs sequentially, with a back-off mechanism. Unfortunately, this back-off also affects the healthy GitLab instances, delaying deployments and leaving jobs in status Pending for up to 60 seconds.

deployctl runner has a back-off system (reduced contact on errors) per registered runner!
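The back-off formula deployctl uses is not specified here; capped exponential back-off is one common choice and is what this sketch swaps in. The key point from the text is that each registered runner keeps its own attempt counter, so errors for one runner do not slow down polling for the others. `backoff_delay` and its defaults are hypothetical.

```shell
# backoff_delay ATTEMPT [BASE] [MAX] (hypothetical): seconds to wait before
# retry number ATTEMPT, doubling from BASE (default 1) up to MAX (default 60).
backoff_delay() {
  local attempt=$1 base=${2:-1} max=${3:-60}
  local delay=$base i=0
  while [ "$i" -lt "$attempt" ] && [ "$delay" -lt "$max" ]; do
    delay=$((delay * 2))
    i=$((i + 1))
  done
  if [ "$delay" -gt "$max" ]; then
    delay=$max
  fi
  echo "$delay"
}
```

With these defaults, a runner that has failed three times in a row waits `backoff_delay 3`, i.e. 8 seconds, while a healthy runner keeps its own counter at zero and is unaffected.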

Secondly, since GitLab 9.x, long polling has been introduced to reduce the database load caused by runners' API polling requests. It is a very nice solution: a job request blocks for up to 50 seconds and returns immediately with a job as soon as one is available. This works somewhat like a hybrid of polling and pushing, and greatly improves how quickly a runner starts a job, without the overhead of very frequent polls.

However, it does not work well when more than one runner is registered on a server: because the multi-runner polls sequentially, one runner at a time, it leads to a delay of up to 50 seconds per registered runner.

As of 0.3.2, deployctl runner long-polls in multiple threads, one per registered runner, with a maximum of 8 registered runners and individual back-off timing per runner.

This results in very fast starts of new builds, while deployctl puts locks in place for deployments (the web configuration and the RPM/apt repositories should only be modified by one job at a time).
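deployctl does this with threads; the bash sketch below swaps in one background process per runner to show the same structure, plus an flock(1)-based lock around deployment steps. `start_pollers`, `with_deploy_lock` and the poll function are hypothetical; a real poll function would long-poll GitLab for jobs with that runner's token and apply its own back-off.

```shell
MAX_RUNNERS=${MAX_RUNNERS:-8}

# start_pollers POLL_FN TOKEN... (hypothetical): run POLL_FN once per runner
# token, each in its own background process, so one runner's long poll
# never delays the others.
start_pollers() {
  local poll_fn=$1; shift
  if [ "$#" -gt "$MAX_RUNNERS" ]; then
    echo "at most $MAX_RUNNERS runners supported, got $#" >&2
    return 1
  fi
  local token
  for token in "$@"; do
    "$poll_fn" "$token" &
  done
  wait   # block until every poller has exited
}

# with_deploy_lock LOCKFILE CMD... (hypothetical): run CMD while holding an
# exclusive lock, so only one job at a time modifies e.g. the web config.
with_deploy_lock() {
  local lockfile=$1; shift
  (
    flock -x 9 || exit 1   # waits until no other job holds the lock
    "$@"
  ) 9>"$lockfile"
}
```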

To enable long polling on your self-managed GitLab instance, add the following line to /etc/gitlab/gitlab.rb (and apply it with gitlab-ctl reconfigure):

gitlab_workhorse['api_ci_long_polling_duration'] = "60s"

Cancel Job

Basically, cancelling a job does not work in GitLab once the job has started or, for a Docker build, once authorization for the Docker image has been completed.

Furthermore, a runner has no way to know that a job has been cancelled until it reports that the job has failed or succeeded. With gitlab-runner, nothing is in place to act on this, such as removing the new deployment and putting the previous one back in place. (You can check this by refreshing the build trace after cancelling the job or pipeline.)

What deployctl runner could do, when it receives a cancel notification for a succeeded or failed job, is put the previous deployment, if there was one, back in place.

Remark: this is not implemented yet (as of 0.3.4).
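Since this is not implemented, the following is only a sketch of what putting the previous deployment back could look like, under an assumed layout that deployctl does not necessarily use: every release in its own directory under releases/, with a current symlink pointing at the live one. Switching renames a new symlink over the old one, which is atomic on the same filesystem; `mv -T` is a GNU coreutils option, and both function names are hypothetical.

```shell
# activate_release ROOT RELEASE (hypothetical): point ROOT/current at
# ROOT/releases/RELEASE by renaming a fresh symlink over the old one.
activate_release() {
  local root=$1 release=$2
  ln -sfn "releases/$release" "$root/current.tmp" || return 1
  mv -T "$root/current.tmp" "$root/current"   # rename(2): atomic swap
}

# rollback_release ROOT PREVIOUS CANCELLED (hypothetical): re-activate the
# previous release, then delete the cancelled one.
rollback_release() {
  local root=$1 previous=$2 cancelled=$3
  [ -n "$previous" ] && [ -n "$cancelled" ] || return 1   # never rm releases/
  activate_release "$root" "$previous" || return 1
  rm -rf -- "$root/releases/$cancelled"
}
```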

No color output

Prior to 0.3.0, with gitlab-runner, all commands were executed by a script with stdout redirected, resulting in plain white output that made errors and warnings difficult to spot.

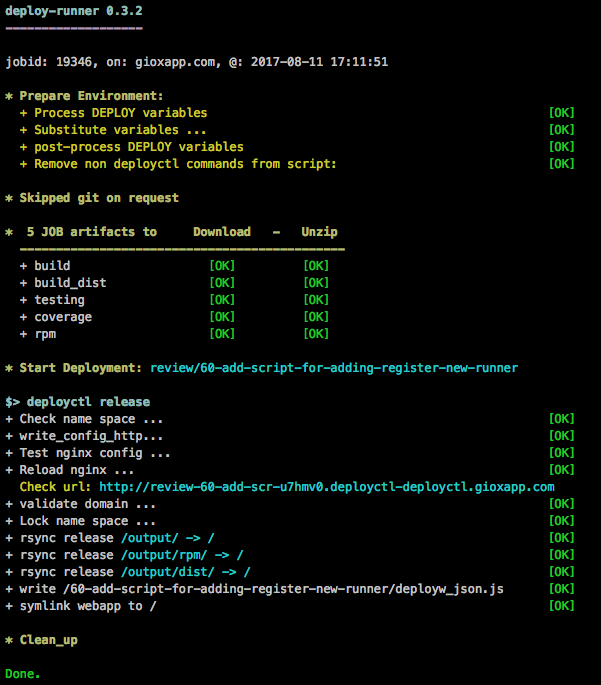

From 0.3.0 onwards, with the runner integrated into deployctl, we can display colors.
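GitLab's job trace renders ANSI color escape sequences, so colored output only requires the runner to pass them through unchanged. A minimal sketch of colored error and warning helpers (the names are hypothetical; how deployctl formats its own output is not shown here):

```shell
# log_color SGR_CODE MESSAGE...: print MESSAGE wrapped in an ANSI color code
# (31 = red, 33 = yellow) followed by a reset, so later output is unaffected.
log_color() {
  local color=$1; shift
  printf '\033[%sm%s\033[0m\n' "$color" "$*"
}

log_error() { log_color 31 "ERROR: $*"; }
log_warn()  { log_color 33 "WARNING: $*"; }
```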

Very annoying trace output:

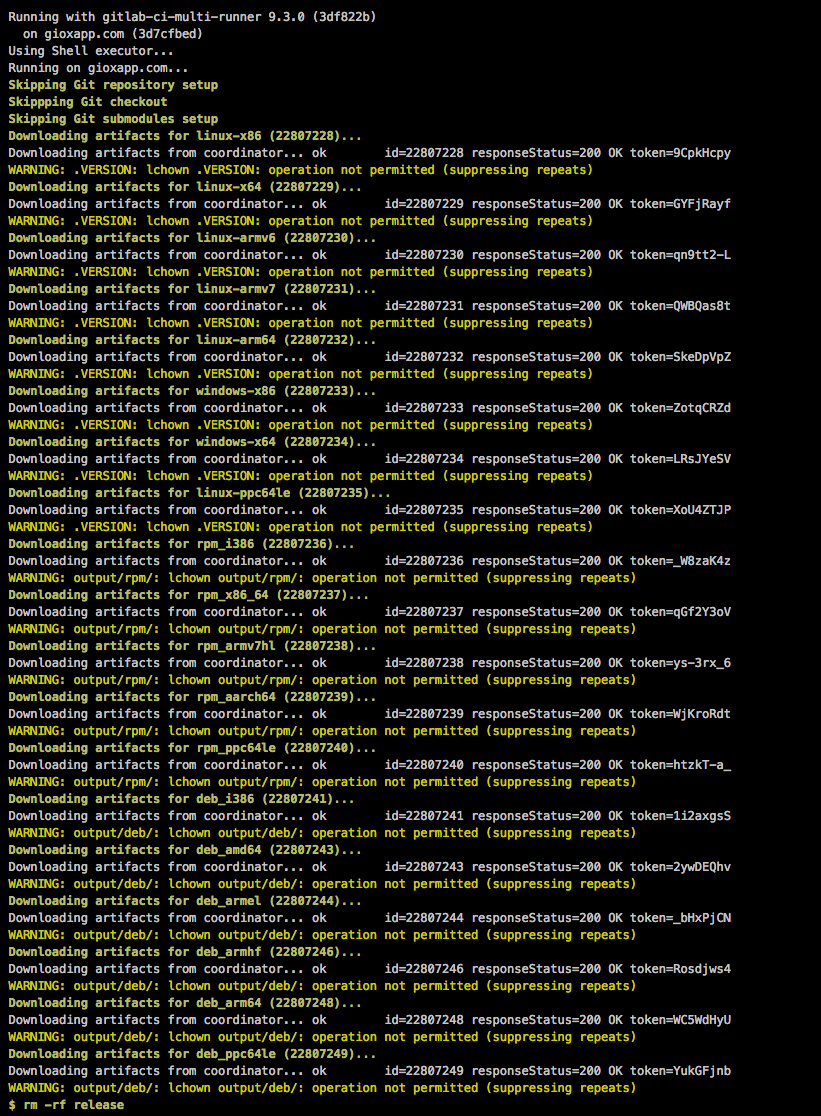

When artifacts are downloaded, they are unzipped with their file attributes preserved. This means that if the content was built in a Docker container, the artifacts are zipped with their file attributes (owner), and pulling them on a shell runner, where a non-privileged user tries to take ownership of those files, results in:

Extract from the build of heloworld_c with gitlab-runner: